Last time, PCA cleaned up the chaos like a productivity influencer with a color-coded planner. Elegant, efficient, and obsessed with straight lines.

But today’s contestant?

They look at straight lines and say:

“Respectfully, I prefer vibes.”

Please welcome t-SNE, which actually stands for t-Distributed Stochastic Neighbor Embedding. I know it’s a mouthful.

t-SNE: The algorithm that keeps your friend group together at a party.

High-dimensional data lives in a weird place.

Imagine thousands of data points living in a space with hundreds of features. We humans can’t visualize that. Anything above 3D is too much for our monkey brains.

t-SNE steps in and says, “Don’t worry. I’ll turn this weird place into something you can actually look at.”

t-SNE is a nonlinear dimensionality reduction technique designed primarily for visualization.

Where PCA tries to preserve the data’s overall geometry, t-SNE focuses on one mission: similar points should stay close together.

That’s it. That’s the obsession.

It builds visual maps where clusters naturally appear, often revealing hidden structure you didn’t even know existed.

🧠 Play By Play: How t-SNE Works

Let’s walk through what happens behind the scenes.

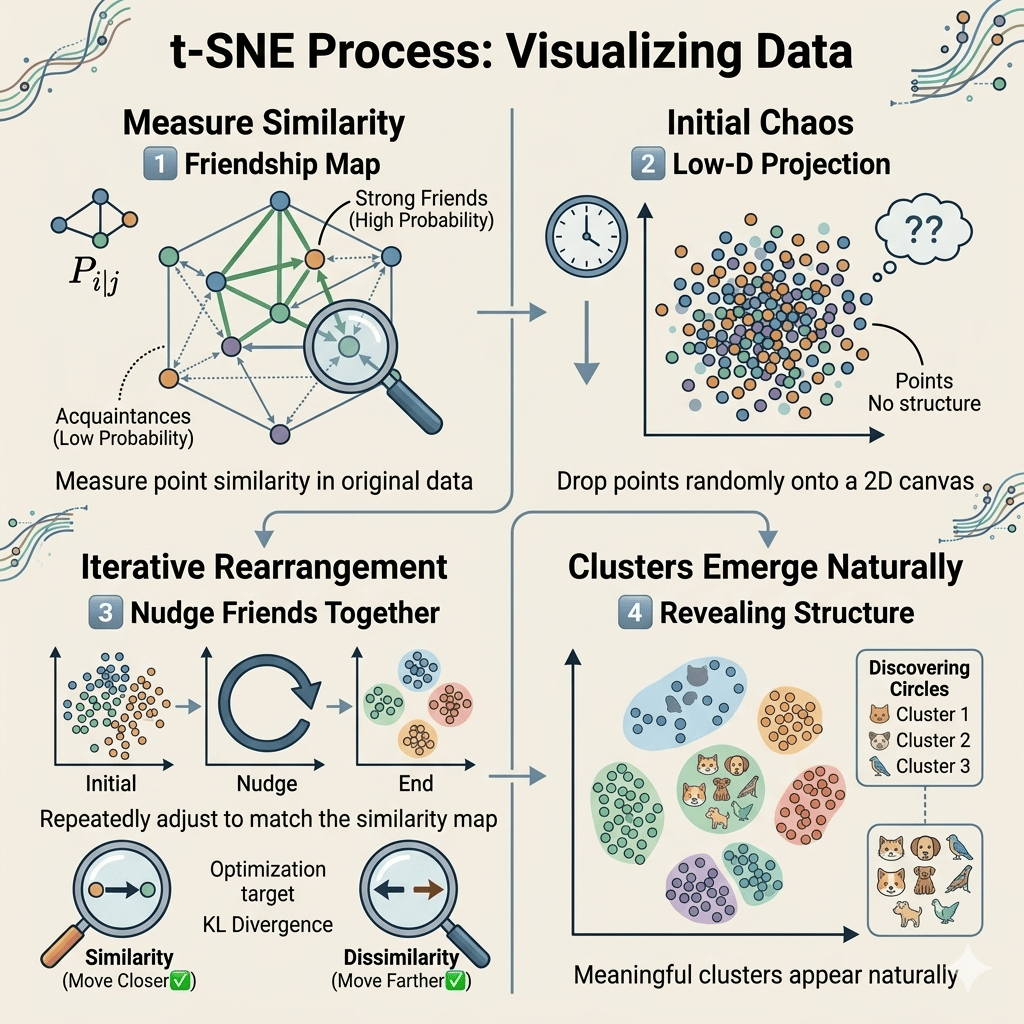

1️⃣ Measure Who’s Similar to Whom

t-SNE starts by looking at your original high-dimensional data and asking: “Which points are neighbors?”

It calculates probabilities that describe how likely one point would “pick” another as its neighbor.

Think of it like social preferences:

Close points → strong friendship probability

Far points → barely acquaintances

The algorithm builds a giant friendship map.
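To make the friendship map concrete, here is a minimal sketch in plain Python. It assumes one fixed `sigma` for every point, which is a simplification: real t-SNE tunes a separate bandwidth per point to hit a target perplexity. The function name `neighbor_probabilities` and the toy points are made up for illustration.

```python
import math

def neighbor_probabilities(points, sigma=1.0):
    """Return P where P[i][j] is how likely point i picks point j as a neighbor.

    Assumption: a single fixed sigma. Real t-SNE searches for one sigma
    per point so that every row matches a chosen perplexity.
    """
    n = len(points)
    P = []
    for i in range(n):
        # Gaussian weight for every other point; a point never picks itself.
        weights = [
            0.0 if i == j
            else math.exp(-math.dist(points[i], points[j]) ** 2 / (2 * sigma ** 2))
            for j in range(n)
        ]
        total = sum(weights)
        P.append([w / total for w in weights])  # each row sums to 1
    return P

# Hypothetical toy data: two tight pairs that sit far apart.
points = [(0.0, 0.0), (0.0, 1.0), (5.0, 5.0), (5.0, 6.0)]
P = neighbor_probabilities(points)
```

With these toy points, `P[0][1]` comes out very close to 1 (point 0 almost certainly picks its nearby buddy), while `P[0][2]` is practically zero.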

2️⃣ Drop Everything Into 2D Randomly

Next, t-SNE throws all points onto a 2D (or 3D) canvas.

At first, it looks like chaos.

No structure. No clusters. Just vibes and confusion.

Basically, the first draft of any group project.
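The canvas-throwing step is about as simple as it sounds. A sketch, assuming a tiny Gaussian cloud around the origin; the `scale` value and the name `random_layout` are illustrative choices, not fixed parts of the algorithm. Some implementations skip pure randomness and start from a PCA projection instead (scikit-learn's `TSNE` accepts `init="pca"`, for example).

```python
import random

def random_layout(n_points, n_dims=2, scale=0.01, seed=0):
    """Scatter n_points near the origin of an n_dims-dimensional canvas."""
    rng = random.Random(seed)  # seeded so the chaos is at least reproducible
    return [[rng.gauss(0.0, scale) for _ in range(n_dims)] for _ in range(n_points)]

Y = random_layout(4)  # four points, each with an (x, y) position
```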

3️⃣ Rearrange Until Friend Groups Make Sense

Now the magic begins.

t-SNE repeatedly nudges points around so that:

Similar points move closer together.

Dissimilar points move farther apart.

It keeps adjusting positions until the relationships in 2D resemble the relationships from the original high-dimensional space.

Imagine rearranging people at a party until everyone ends up standing near their actual friends.

Awkward at first. Accurate by the end.
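The nudging is gradient descent on a mismatch score (a KL divergence) between the high-dimensional friendships and the 2D ones, where 2D closeness is measured with a Student-t curve (the "t" in t-SNE). Below is a stripped-down sketch in plain Python. It leaves out the momentum and early-exaggeration tricks real implementations use, and the joint probability table `P` is hand-written toy data (points 0 and 1 are friends, points 2 and 3 are friends), not the output of a real run.

```python
import math
import random

def tsne_step(P, Y, learning_rate=1.0):
    """One plain gradient-descent step on the t-SNE cost."""
    n = len(Y)
    # Student-t weight for every pair in the low-dimensional map.
    W = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i != j:
                W[i][j] = 1.0 / (1.0 + math.dist(Y[i], Y[j]) ** 2)
    total = sum(map(sum, W))

    new_Y = []
    for i in range(n):
        grad = [0.0] * len(Y[i])
        for j in range(n):
            if i == j:
                continue
            q = W[i][j] / total  # how friendly i and j look in the map
            # p > q: the pair sits too far apart in the map, so the update
            # below pulls it together. p < q: it gets pushed apart.
            coeff = 4.0 * (P[i][j] - q) * W[i][j]
            for d in range(len(grad)):
                grad[d] += coeff * (Y[i][d] - Y[j][d])
        new_Y.append([y - learning_rate * g for y, g in zip(Y[i], grad)])
    return new_Y

# Hypothetical joint probabilities (all entries sum to 1).
P = [
    [0.0,   0.24,  0.005, 0.005],
    [0.24,  0.0,   0.005, 0.005],
    [0.005, 0.005, 0.0,   0.24],
    [0.005, 0.005, 0.24,  0.0],
]

rng = random.Random(0)
Y = [[rng.gauss(0.0, 0.01) for _ in range(2)] for _ in range(4)]  # random start
for _ in range(200):
    Y = tsne_step(P, Y)

friend_gap = math.dist(Y[0], Y[1])    # distance between two friends
stranger_gap = math.dist(Y[0], Y[2])  # distance between two strangers
```

After the loop, `friend_gap` should be much smaller than `stranger_gap`: the party has sorted itself out.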

4️⃣ Clusters Emerge Naturally

Eventually, something cool happens:

Clusters appear.

Not because we told the algorithm to create groups — but because similarity naturally pulls points together.

This is why t-SNE plots are famous in machine learning papers. You’ll often see colorful blobs representing categories that were hidden before visualization.

It’s like discovering your dataset secretly had friend circles all along.
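In practice, few people hand-roll these steps. A minimal sketch using scikit-learn's `TSNE` on its bundled handwritten-digits dataset, assuming scikit-learn is installed; the 300-image subset, the perplexity of 30, and the seed are illustrative choices, not recommendations.

```python
from sklearn.datasets import load_digits
from sklearn.manifold import TSNE

digits = load_digits()
# 300 images, each flattened to 64 pixel features.
X, labels = digits.data[:300], digits.target[:300]

# t-SNE only ever sees X. The labels are kept aside purely for coloring a plot later.
tsne = TSNE(n_components=2, perplexity=30.0, init="pca", random_state=0)
embedding = tsne.fit_transform(X)  # one (x, y) position per image
```

Scatter-plotting `embedding` and coloring each point by `labels` is the usual way to check whether the digits have found their friend circles.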

TLDR: The t-SNE Mood Board

| Step | What t-SNE Does | Vibe |
|---|---|---|
| 1 | Measures similarity between points. | “Who’s friends?” |
| 2 | Places points randomly in 2D. | “We’ll figure it out.” |
| 3 | Moves points to preserve neighbors. | “Stand next to your people.” |
| 4 | Forms clusters naturally. | “Friend groups unlocked.” |
| 5 | Creates a visualization humans understand. | “Finally makes sense.” |

Conclusion

Where PCA brought discipline and structure, t-SNE brings intuition.

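It doesn’t try to summarize everything perfectly. Instead, it preserves what humans care about most when looking at data: local relationships, meaning who sits next to whom. The trade-off is that distances between far-apart clusters on a t-SNE plot shouldn’t be read literally.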

t-SNE turns abstract high-dimensional math into something visual, interpretable, and surprisingly human.

It reminds us that sometimes understanding data isn’t about simplifying every detail…

…it’s about seeing who belongs together.

Next episode, a new contestant takes the stage: Gaussian Mixture Models, an algorithm that combines probability, clustering, and a little bit of Bayesian mystery.

Stay tuned. The auditions continue.